If you own a website and care about making it search engine friendly, you are probably aware of a file called robots.txt, which often lists the sitemap for your website. Search engine web crawlers pick up this sitemap, which is how search engines end up indexing billions of web pages.

What does the robots.txt file do?

- When a web robot or web crawler first visits a website, it looks for the robots.txt file. For example, when a search crawler visits https://devilsworkshop.org, it will look for https://devilsworkshop.org/robots.txt.

- The robots.txt file tells search engine crawlers which directories of a domain they may and may not look up. For instance, if your website hosts a wiki that you do not want search engines to look up, that directory has to be excluded in robots.txt.
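To make the allow/disallow idea concrete, here is a minimal sketch using Python's standard `urllib.robotparser` module. The sample file contents, the `/wiki/` path, the sitemap URL, and the helper name `is_allowed` are assumptions made up for illustration; they are not taken from any real robots.txt.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents (an assumption for this example):
# every crawler is asked to stay out of /wiki/.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /wiki/
Sitemap: https://devilsworkshop.org/sitemap.xml
"""


def is_allowed(robots_txt: str, url: str, user_agent: str = "*") -> bool:
    """Return True if the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    # parse() takes the file as a list of lines, so no network access is needed.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    # The wiki directory is excluded, the rest of the site is not.
    print(is_allowed(SAMPLE_ROBOTS_TXT, "https://devilsworkshop.org/wiki/page"))
    print(is_allowed(SAMPLE_ROBOTS_TXT, "https://devilsworkshop.org/about"))
```

With this sample, the first check should come back `False` and the second `True`. Keep in mind that robots.txt is only a request to crawlers; a well-behaved crawler honors it, but the file does not block access by itself.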

This is why it is necessary to check the robots.txt file for typos and errors, which might defeat the purpose of excluding certain directories on your website from being looked up.

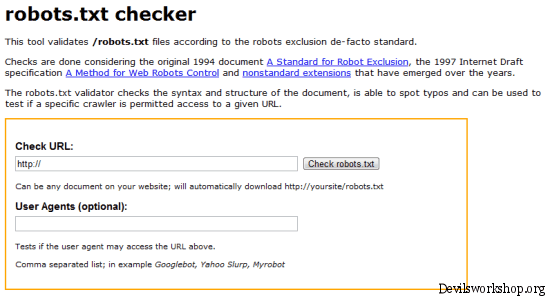

About Robots.txt checker

- The website allows you to enter any URL and check its robots.txt file. The scan usually looks for errors based on what is disallowed.

- It also shows warnings for any entries that might be misunderstood or ignored by search engine crawlers.
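The kind of check described above can be sketched in a few lines of Python. This is not how the Robots.txt Checker website works internally (the post does not say); it is only a rough illustration of catching typos. The function name `check_robots_txt`, the set of accepted directives, and the warning messages are all assumptions chosen for this example.

```python
from typing import List

# Directives this sketch accepts (an assumption; real crawlers differ in
# which non-standard directives, such as Crawl-delay, they honor).
KNOWN_DIRECTIVES = {"user-agent", "disallow", "allow", "sitemap", "crawl-delay"}


def check_robots_txt(text: str) -> List[str]:
    """Return a list of human-readable warnings for a robots.txt file."""
    warnings = []
    seen_user_agent = False
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        if ":" not in line:
            warnings.append(f"line {number}: missing ':' separator")
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        name = field.lower()
        if name not in KNOWN_DIRECTIVES:
            # A misspelled directive is likely to be silently ignored by crawlers.
            warnings.append(f"line {number}: unknown directive '{field}'")
        elif name == "user-agent":
            seen_user_agent = True
        elif name in {"allow", "disallow"}:
            if not seen_user_agent:
                warnings.append(f"line {number}: '{field}' appears before any User-agent line")
            if value and not value.startswith(("/", "*")):
                warnings.append(f"line {number}: path '{value}' should start with '/'")
    return warnings


if __name__ == "__main__":
    sample = "User-agent: *\nDissallow: /wiki/\nDisallow: private/\n"
    for warning in check_robots_txt(sample):
        print(warning)
```

In the sample, the misspelled `Dissallow` line and the path without a leading slash each produce a warning, which is exactly the sort of mistake that would leave a directory open to crawlers without you noticing.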

This is not really something I would recommend webmasters obsess over, as the robots.txt files of most websites are similar. For example, you will see nearly identical robots.txt files for almost all generic blogs hosted with WordPress. This tool will be useful mainly if you have many directories that you do not want web bots to look up.

You might also like to read about how to look up sites and do manual web crawling.

Do try out this web service and let me know your views on it by dropping in a comment.

Link: Robots.txt Checker

3 Comments

Where is the link..btw ??

Oops! Thanks for pointing that out to me, it is fixed now.

Had a look at it. As you have pointed out, it shows warnings on both allowed and disallowed entries. It showed a warning on the allow rule for Google ads (which is actually right). I guess the right way to check robots.txt is the webmaster tool, which shows the number of crawl errors and what caused them.